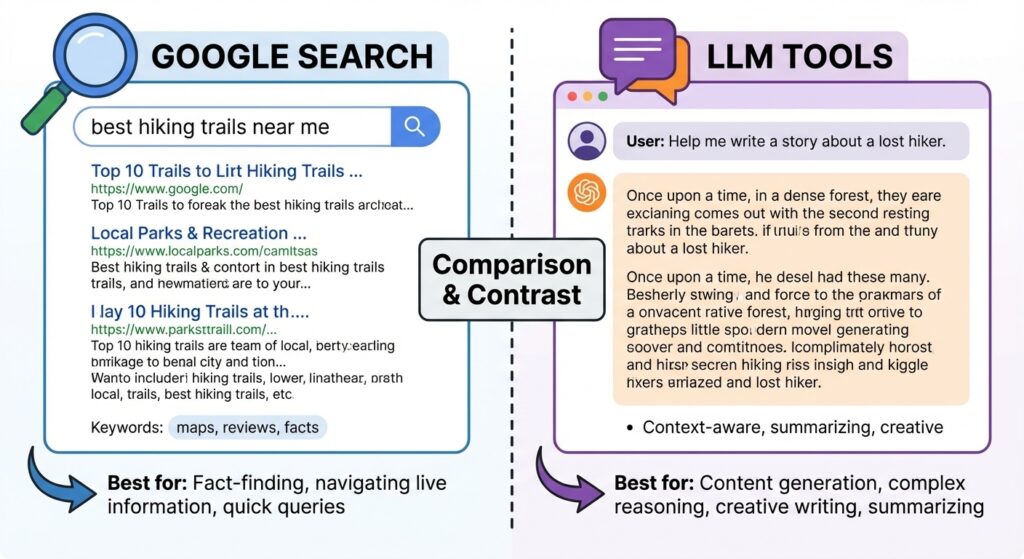

Google Search vs. LLM: When to Use Each Tool

You probably use Google every day. But have you tried using an LLM like ChatGPT, Perplexity, or Grok for research? If not, you might be missing a powerful option.

Search Engines Excel at Quick Answers

Google and other search engines are fantastic for straightforward queries. Need to know today’s weather, your kid’s school calendar, or the date and location of an upcoming industry conference? A search engine gets you there fast.

Search engines also work well when you need in-depth information from a few trusted websites. You might search, skim a couple of reputable results, and find your answer without extra effort. There’s minimal synthesis required—you’re retrieving specific information from known sources.

For simple questions, this approach is efficient. Learning how to treat a fever? One or two medical websites will likely have what you need, and reading more won’t add much actionable value.

LLMs Shine for Complex Research

Sometimes, though, research demands more. Imagine you’re trying to understand a rare disease diagnosis and its treatment options. Or your house’s foundation needs work, and you need to understand the problem before calling a contractor. Or at work, a new competitor has entered your market, and you need to understand their positioning and potential impact on your business.

These scenarios require checking multiple sources and synthesizing the information into a coherent understanding. Traditional search means opening many tabs, taking notes, maybe creating a document to track your progress. You might discover related topics and open more tabs to explore them later.

LLMs change this equation. They’re built to synthesize information across multiple sources and adapt as you refine your questions. Instead of you juggling tabs and notes, the LLM handles the heavy lifting.

How LLMs Work (And Their Limitations)

By default, LLMs generate responses from patterns learned in their training data rather than by searching the web in real time, although some tools, such as Perplexity, also search the web and cite what they find. LLMs also tend to limit the effort they spend on a first response to conserve computational power, so you may need to ask follow-up questions to get complete answers.

This isn’t a weakness; it’s a feature of the interaction model. You need to engage with the LLM: clarify your questions, ask related follow-ups, and push back if results don’t match your needs. Sometimes rephrasing a question will unlock the answer you’re seeking.

Don’t be afraid to tell an LLM that its response missed the mark. This isn’t a sign the tool is broken—it’s feedback that your phrasing and the LLM’s interpretation didn’t align. LLMs can be stubborn in how they interpret requests, so clearly articulate what you wanted and why the answer fell short.

The Hallucination Problem (And How to Fix It)

You’ve likely heard that LLMs sometimes “hallucinate,” providing confident-sounding but inaccurate information. This happens because LLMs are trained to produce plausible-sounding answers, even when they are uncertain. That’s why it’s critical to push back on information you receive and ask for sources.

The best practice? Ask multiple LLMs the same question. Compare their responses, verify claims, and use disagreements as a signal to dig deeper. This approach—pitting LLMs against each other—helps you catch errors and build more accurate, well-rounded research.

Swa Makes Multi-LLM Research Seamless

This is where Swa changes the game. Instead of logging into multiple platforms and manually comparing responses, Swa lets you access multiple LLMs in one place.

Here’s how it works:

- Ask your question to ChatGPT and refine the interaction until you have a solid response.

- Ask Perplexity to evaluate what ChatGPT provided and add refinements based on your earlier questions.

- Ask another LLM to synthesize all responses into one cohesive answer—no repetition, just clarity.
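For readers who like to see the workflow as code, the three steps above can be sketched roughly as follows. This is only an illustration of the answer, review, and synthesize pattern, not Swa's actual implementation or API: the `Model` type, the `multi_llm_research` function, and the `fake_*` stand-ins are all hypothetical names invented for this example, and a real setup would replace the stand-ins with calls to actual LLM services.

```python
from typing import Callable

# Hypothetical stand-in for any LLM: a function that takes a prompt and returns text.
Model = Callable[[str], str]


def multi_llm_research(question: str, first: Model, reviewer: Model, synthesizer: Model) -> str:
    """Chain three models: answer, review, then merge into one response."""
    # Step 1: get an initial answer from the first model.
    draft = first(question)

    # Step 2: ask a second model to evaluate and refine that answer.
    review = reviewer(
        f"Question: {question}\n\nAnswer to evaluate:\n{draft}\n\n"
        "Point out errors or gaps and add refinements."
    )

    # Step 3: ask a third model to combine both into one cohesive answer.
    return synthesizer(
        f"Question: {question}\n\nDraft answer:\n{draft}\n\nReview:\n{review}\n\n"
        "Combine these into one cohesive answer without repetition."
    )


# Toy stand-ins (assumptions for illustration) so the sketch runs without API keys.
def fake_first(prompt: str) -> str:
    return "DRAFT"


def fake_reviewer(prompt: str) -> str:
    return "REVIEW of " + ("DRAFT" if "DRAFT" in prompt else "nothing")


def fake_synthesizer(prompt: str) -> str:
    return "FINAL" if "DRAFT" in prompt and "REVIEW" in prompt else "INCOMPLETE"


result = multi_llm_research(
    "What causes foundation cracks?", fake_first, fake_reviewer, fake_synthesizer
)
print(result)
```

The point of the sketch is that each model sees the original question plus the earlier output, so the reviewer can push back on the draft and the synthesizer can reconcile the two.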

With Swa in Slack, you do all this in one conversation. Answers from different LLMs appear in the same thread, eliminating the need to hop between apps and manually compare results. You spend less time managing tools and more time analyzing what the LLMs uncovered to identify gaps or additional research needed.

Even when you’re an expert on a topic, Swa can serve as a knowledgeable editor—helping you spot advancements you’ve missed or verify that your knowledge is still current.

The Bottom Line

Search engines excel at straightforward, specific queries where you need quick answers from known sources. LLMs offer an evolving, conversational research tool that can tackle intricate, multifaceted problems by adapting to your needs mid-research.

The combination of both—search for quick facts, LLMs for complex synthesis—brings efficiency to your research process. You spend less time gathering information and more time analyzing and applying what you learn. That matters, whether you’re solving problems at work or at home.

Ready to experience multi-LLM research without the friction? Try Swa free and see how multiple perspectives streamline your research.

Guess what? I didn’t use ChatGPT, or Claude, or Grok to write this. I used all of them with Swa! That said, these are my words, my sentiment, and my promises. Human-in-the-loop AI is what Swa is about: empowering everyone to do more, and better, in less time.

– Mike Sirchuk, Founder of Swa